———————————————–

UPDATE March 2012 – VMware have just confirmed that the fix will be released as part of vSphere5 U2. Interesting, because as of today (March 15th) Update 1 hasn’t even been released – how much longer will that be, I wonder? I’m also still waiting for a KB article but it’s taking its time…

UPDATE May 2012 – VMware have just released article KB2013844 which acknowledges the problem – the fix (until update 2 arrives) is to rename your datastores. Gee, useful… 🙂

———————————————–

For the last few weeks we’ve been struggling with our vSphere5 upgrade. What I assumed would be a simple VUM orchestrated upgrade turned into a major pain, but I guess that’s why they say ‘never assume’!

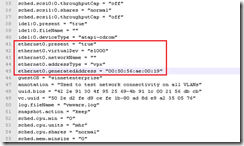

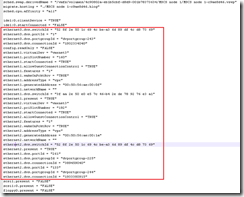

Summary: there’s a bug in the upgrade process whereby NFS mounts are lost during the upgrade from vSphere4 to vSphere5:

- if you have NFS datastores with a space in the name

- and you’re using ESX classic (ESXi is not affected)

Our issue was that after the upgrade completed, the host would start back up but the NFS mounts would be missing. As we use NFS almost exclusively for our storage this was a showstopper. We quickly found that we could simply remount the NFS datastores with no changes or reboots required, so there was no obvious reason why the upgrade process didn’t remount them. With over fifty hosts to upgrade, however, the required manual intervention meant we couldn’t automate the whole process (OK, PowerCLI would have done the trick but I didn’t feel inspired to code a solution) and we aren’t licensed for Host Profiles, which would also have made life easier. Thus started the process of reproducing and narrowing down the problem.

- We tried both G6 and G7 blades as well as G6 rack mount servers (DL380s)

- We used interactive installs using a DVD of the VMware ESXi v5 image

- We used VUM to upgrade hosts using both the VMware ESXi v5 image and the HP ESXi v5 image

- We upgraded from ESX 4.0 U1 to ESX 4.1 and then on to ESXi 5

- We used storage arrays with both NetApp ONTAP v7 and ONTAP v8 (to minimise the possibility of the storage array firmware being at fault)

- We upgraded hosts both joined to and isolated from vCenter

Every scenario we tried produced the same issue. We also logged a call with VMware (SR 11130325012) and yesterday they finally reproduced and identified the issue as a space in the datastore name. As a workaround you can simply rename your datastores to remove the spaces, perform the upgrade, and then rename them back. Not ideal for us (we have over fifty NFS datastores on each host) but better than a kick in the teeth!
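With fifty-odd datastores per host, the rename/rename-back dance is easier to get right if you work out the mapping up front. Here is a minimal Python sketch of just that planning step. It doesn't talk to vCenter at all: the function names, the underscore placeholder and the sample datastore names are all made up for illustration, and the actual renames would still have to be done with whatever tooling you prefer.

```python
def build_rename_plan(names, placeholder="_"):
    """Map each datastore name containing a space to a temporary space-free name.

    Hypothetical helper: it only computes names, it renames nothing.
    """
    taken = set(names)  # names already in use, so temporary names never collide
    plan = {}
    for name in names:
        if " " not in name:
            continue  # only names with spaces need the workaround
        base = name.replace(" ", placeholder)
        candidate = base
        suffix = 1
        while candidate in taken:
            # The obvious replacement is already in use: append a counter
            suffix += 1
            candidate = f"{base}{placeholder}{suffix}"
        taken.add(candidate)
        plan[name] = candidate
    return plan


def reverse_plan(plan):
    """Invert the plan so the original names can be restored after the upgrade."""
    return {temp: original for original, temp in plan.items()}


# Made-up datastore names, purely for illustration
datastores = ["NFS Prod 01", "NFS_Prod_01", "templates", "ISO images"]

plan = build_rename_plan(datastores)
restore = reverse_plan(plan)

for original, temp in plan.items():
    print(f"before upgrade: {original!r} -> {temp!r}")
for temp, original in restore.items():
    print(f"after upgrade:  {temp!r} -> {original!r}")
```

Keeping the reverse mapping around (written to a file, say) matters more than the renaming itself: once the spaces are gone you can't reliably guess which underscores used to be spaces.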

There will be a KB article released shortly so until then treat the above information with caution – no doubt VMware will confirm the technical details more accurately than I have done here. I’m amazed that no-one else has run into this six months after the general availability of vSphere5 – maybe NFS isn’t taking over the world as much as I’d hoped! I’ll update this article when the KB is posted but in the meantime NFS users beware.

Sad I know, but it’s kinda nice to have discovered my own KB article. Who’d have thought that having too much space in my datastores would ever cause a problem? 🙂