Summary: At VMworld in August VMware announced their new hyperconverged offering, EVO:RAIL. I found myself discussing this during TechFieldDay Extra at VMworld Barcelona and this post details my thoughts having spent a bit longer investigating. I’m not the first to write about EVO:RAIL so I’ll quickly recap the basics before giving my thoughts and some things to bear in mind if you’re considering EVO:RAIL.

Briefly, what is EVO:RAIL?

There’s no point in rediscovering the wheel so I’ll simply direct you to Julian Wood’s excellent series;

As of October 2014 there are eight qualified OEM partners, although beyond that list there’s very little actual information available yet. Most of the vendors have an information page but products aren’t shipping yet and it’s difficult to know how they’ll differentiate and compete with each other. Several partners already have their own offerings in the converged infrastructure space so it’ll be interesting to see how well EVO:RAIL fits into their overall product portfolios and how motivated they are to sell it (good thoughts on that for EMC, HP, and Dell). Unlike their own solutions, the form factor and hardware specifications are largely fixed so it’s going to be management additions (iLO cards, integration with management suites like HP OneView etc), service, and support that vary. For partners without an existing converged offering this is a great opportunity to easily and quickly compete in a growing market segment.

UPDATE 29th July 2015 – HP has now walked away from the EVO:RAIL offering. Interesting that they’ve done so very publicly rather than just letting it wilt on the vine…

Things to note about EVO:RAIL

In my ‘introduction to converged infrastructure’ post last year I listed a set of considerations – let’s run through them from an EVO:RAIL perspective;

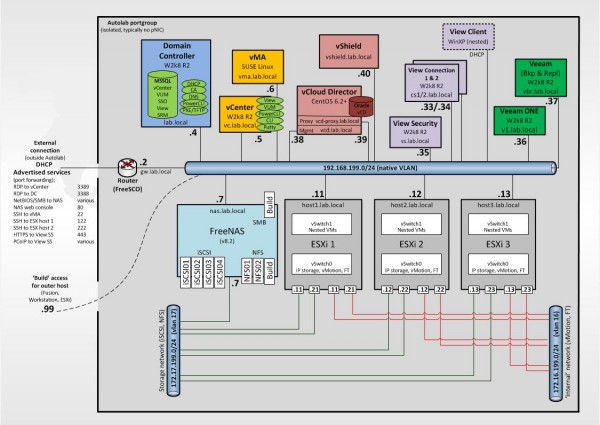

Management. The hyperconverged nature should mean improved management as VMware (and their partners) have done the heavy lifting of integration, licencing, performance tuning etc. EVO:RAIL also offers a lightweight GUI for those that value simplicity while still offering the usual vSphere Web Client and VMware APIs for those that want to use them. This is however a converged appliance and that comes with some limitations – you can manage it using the new HCIA interface or the Web Client but it comes with its own vCSA instance so you can’t add it to an existing vCenter without losing support. It won’t use VUM for patching (although it does promise non-disruptive upgrades), but you can add the vCSA to an existing vCOps instance.

Simplicity. This is the strongest selling point in my opinion – EVO:RAIL is a turnkey deployment of familiar VMware technology. EVO:RAIL handles the deployment, configuration, and management and you can grow the compute and storage automatically as additional appliances are discovered and added. As the technology itself isn’t new there’s not much for support staff to learn, plus there’s ‘one throat to choke’ for both hardware and software (the OEM partner). Some people have pointed out that it doesn’t even use a distributed switch, despite being licenced with Ent+. Apparently the choice of a standard vSwitch was because of a potential performance issue with vDS and VSAN, which eventually turned out not to be an issue. Simplicity was also a key consideration and VMware felt there was no need for a vDS at this scale. I imagine we’ll see a vDS in the next iteration.

Flexibility. This is probably the biggest constraint for customers – it’s a ‘fixed’ appliance and there’s limited scope for change. The hardware and software you get with EVO:RAIL is fixed (4 nodes, 192GB RAM per node, no NSX etc) so even though you have a choice of who to buy it from, what you buy is largely the same regardless of who you choose. There is currently only one model so you have to scale linearly – you can’t buy a storage heavy node or a compute heavy node for example. EVO:RAIL is sold 4 nodes at a time and the SMB end of the market may find it hard to finance that kind of CAPEX. As mentioned earlier the partner is responsible for updates (firmware and patching), so you won’t be able to upgrade to a new version of vSphere until they’ve validated and released it. Likewise you can’t plug in that nice EMC VNX you have lying around to provide extra storage – you have to use the provided VSAN. Flexibility vs simplicity is always a tradeoff!

Interoperability/integration. In theory this is a big plus for EVO:RAIL as it’s the usual VMware components which have probably the best third party integration in the market (I’m assuming you can use full API access). Another couple of notable integration requirements;

- 10GbE networking (ToR switch) is a requirement as it’s used to connect the four servers inside the 2U form factor given the lack of a backplane. You’ll therefore need 8 ports per appliance. I spoke to VMware engineers at VMworld on this and was told VMware looked for a 2U form factor where they could avoid this but couldn’t. Many SMBs have not adopted 10GbE yet so it’s a potential stumbling block – of course partners may use this opportunity to bundle 10GbE networking, which would be a good way to differentiate their solution.

- IPv6 is required for the discovery feature used when more EVO:RAIL appliances are added. This discovery process is proprietary to VMware though it operates much like Apple’s Bonjour and apparently IPv6 is the only protocol which works (it guarantees a local link address).
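To make the ‘scale linearly’ point concrete, here’s a minimal Python sketch of what each extra appliance adds. It only uses the figures quoted above (4 nodes per appliance, 192GB RAM per node, 8 x 10GbE ports per appliance); the `Footprint` class and `footprint` function are names I’ve made up for illustration and aren’t part of any VMware tooling.

```python
from dataclasses import dataclass

# Figures quoted in this post; treat them as the fixed launch configuration.
NODES_PER_APPLIANCE = 4
RAM_GB_PER_NODE = 192
TEN_GBE_PORTS_PER_APPLIANCE = 8


@dataclass(frozen=True)
class Footprint:
    """Hypothetical summary of a deployment built from identical appliances."""
    appliances: int
    nodes: int
    ram_gb: int
    tor_ports: int


def footprint(appliances: int) -> Footprint:
    """Return the totals for a given number of appliances.

    Everything scales linearly because there is only one appliance model.
    """
    if appliances < 1:
        raise ValueError("need at least one appliance")
    nodes = appliances * NODES_PER_APPLIANCE
    return Footprint(
        appliances=appliances,
        nodes=nodes,
        ram_gb=nodes * RAM_GB_PER_NODE,
        tor_ports=appliances * TEN_GBE_PORTS_PER_APPLIANCE,
    )


if __name__ == "__main__":
    for count in (1, 2, 4):
        print(footprint(count))
```

So two appliances means 8 nodes, 1536GB of RAM and 16 ToR switch ports, which is worth checking against your switch capacity before ordering.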

Risk. This is always a consideration when adopting new technology but being a VMware backed solution using familiar components will go a considerable way to reducing concern. VSAN is a v1.0 product, as is HCIA, although as that’s simply a thin wrapper around existing, mature, and best of breed components it’s probably safe to say VSAN maturity is the only concern for some people (given initial teething issues). Duncan Epping has a blogpost about this very subject but his summary is ‘it’s fully supported’ so make sure you know your own comfort level when adopting new technology.

Cost. A choice of partners is great as it’ll allow customers to leverage existing relationships. It’s worth pointing out that you buy from the partner so any existing licencing agreements (site licences etc) with VMware probably won’t be applicable. At VMworld I was told VMware have had several customers enquire about large orders (in the hundreds) so it’ll be interesting to see how price affects adoption. I don’t think this is really targeted at service providers and I’ve no idea how pricing would work for them. Having spent considerable time compiling orders, having a single SKU for ordering is very welcome!

Pricing

Talking of pricing, let’s have a look at ballpark costs. I’ve heard, though not been officially quoted, a cost of around €150,000 per 4 node block (or £120,000 for us Brits). This might seem high but bear in mind what you need;

UPDATE: 30th Nov – I realised I’d priced in four Supermicro chassis, rather than one, so I’ve updated the pricing.

- Hardware. Let’s say approx £11k per node, so £45k for four nodes ie. one appliance (this is approx – don’t quote!);

- Supermicro FatTwin chassis (inc 10GbE NICs) £3500 (one chassis for all four nodes)

- 2 x E5-2620 CPUs £400 each

- 12 x 16GB DIMMs (192GB RAM) = £2000

- 400GB Enterprise SSD = £4500 (yep!)

- Three 1.2TB 10k rpm SAS disks = £600 x 3 = £1800

- …plus power supplies, sundries

- Software. List pricing is approx £11k per node plus vCenter, so a shade under £50k

- vCenter (vCSA) 5.5 = £2000

- vSphere 5.5 = £2750 per socket = £5500 per node

- VSAN v1 = £1500 per socket = £3000 per node

- Log Insight = £1500 per socket = £3000 per node

- Support and maintenance for 3 years on both hardware and software – approx £15k

- Total cost: £110,000
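If you want to check my sums, here’s a rough Python sketch that adds up the list above. Every figure is one of the ballpark numbers from this post (not a quote), the variable names are just mine, and I’ve assumed the CPU, RAM, SSD and disk prices are per node with a single shared chassis. The itemised hardware comes out below my £45k estimate because power supplies and sundries aren’t itemised.

```python
NODES = 4

# Hardware (GBP), per node unless stated; ballpark figures from the list above.
chassis = 3500  # one Supermicro chassis shared by all four nodes
hardware_per_node = {
    "cpus": 2 * 400,       # two CPUs at £400 each
    "ram": 2000,           # 12 x 16GB DIMMs
    "ssd": 4500,           # 400GB enterprise SSD
    "sas_disks": 3 * 600,  # three 1.2TB 10k SAS disks
}
itemised_hardware = chassis + NODES * sum(hardware_per_node.values())

# The post rounds hardware up to roughly £45k to cover PSUs and sundries.
hardware_estimate = 45_000

# Software (GBP): per-socket list prices, two sockets per node.
SOCKETS_PER_NODE = 2
software_per_socket = {"vsphere": 2750, "vsan": 1500, "log_insight": 1500}
vcenter = 2000
software = vcenter + NODES * SOCKETS_PER_NODE * sum(software_per_socket.values())

support_3yr = 15_000

total = hardware_estimate + software + support_3yr
rumoured_price = 120_000  # the unofficial figure mentioned earlier

print(f"Itemised hardware: £{itemised_hardware:,}")
print(f"Software:          £{software:,}")
print(f"Total (approx):    £{total:,}")
print(f"Gap to rumoured price: £{rumoured_price - total:,}")
```

That gives £39,900 of itemised hardware, £48,000 of software, and an overall total of £108,000, which I’ve rounded to £110,000 above.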

Once pricing is announced by the partners we’ll see just how much of a premium is being charged for the simplicity, automation, and integration that’s baked in to the EVO:RAIL appliance. There are of course multitudes of pricing options – you could just buy four commodity servers and an entry level SAN but there’s not much value in comparing apples and oranges (and I only have so much time to spend on this blogpost).

UPDATE 1st Dec 2014 – Howard Marks has done a more detailed price breakdown where he also compares a solution using Tegile storage. Christian Mohn also poses a question and potential ‘gotcha’ about the licencing – worth a read.

UPDATE May 2015 – VMware has introduced a ‘loyalty’ program to allow use of existing licences.

Competition

VMware aren’t the first to offer a converged appliance – in fact they’re several years behind. VCE’s vBlock came first back in 2010 and was followed by hyperconverged vendors like Nutanix and Simplivity. As John Troyer mentioned on vSoup’s VMworld podcast, Scale Computing use KVM to offer an EVO:RAIL competitor at cheaper prices (and have done for a few years). Looking at Gartner’s magic quadrant for converged infra it’s a pretty crowded market.

Microsoft recently announced their Cloud Platform Services (Cloud Pro thoughts on it) which was developed with Dell (who are obviously keeping their converged options wide open as they’ve also partnered with Nutanix and VMware on EVO:RAIL). While more similar to the upcoming EVO:RACK it’s another validation of the direction customers are expected to take.

Final thoughts

From a market perspective I think VMware’s entry into the hyperconverged marketplace is both a big deal and a non-event. It’s big news because it will increase adoption of hyperconverged infrastructure, particularly in the SMB space, through increased awareness and because EVO:RAIL is backed by large tier 1 vendors. It’s a non-event in that EVO:RAIL doesn’t offer anything new other than form factor – it’s standard VMware technologies and you could already get similar (some would say superior) products from the likes of Nutanix, Simplivity and others.

Personally I’m optimistic and positive about EVO:RAIL. Reading the interview with Dave Shanley it’s impressive how much was achieved in 8 months by 6 engineers (backed by a large company, but nonetheless). If VMware can address the current limitations around management, integration, and flexibility while maintaining the simplicity, it seems likely to be a winner.

Pricing for EVO:RAIL customers will be key although not all of the chosen partners are likely to compete on price.

UPDATE: April 2015 – a recent article from Business Insider implies that pricing is proving a considerable barrier to adoption for EVO:RAIL.

UPDATE: July 2015 – VMware have now offered extra configurations to allow double the VM density.

You can see our roundtable discussion on hyperconvergence at Tech Field Day Extra (held during VMworld Barcelona) below;

Further Reading

Good post by Marcel VanDeBerg and another from Victoriouss

Mike Laverick has a lot of useful material on his site, but as the VMware Evangelist for EVO:RAIL you’d expect that right! The guys over at the vSoup Podcast also had a chat with Mike.

A comparison of EVO:RAIL and Nutanix (from a Nutanix employee)

Good thoughts over at The Virtualization Practice

VMworld session SDDC1337 – Technical Deep Dive on EVO:RAIL (requires VMworld subscription)

Microsoft’s Cloud Platform System at Network World – good read by Brandon Butler

UPDATED 9th Dec – Some detail about HP’s offerings

UPDATED 16th Dec – EVO:RAIL differentiation between vendors

UPDATED 1st May – EVO:RAIL adoption slow for customers
